HREFLang Management for Multi-CMS Systems

August 13, 2021

Does your CMS Create Correct XML Site Maps?

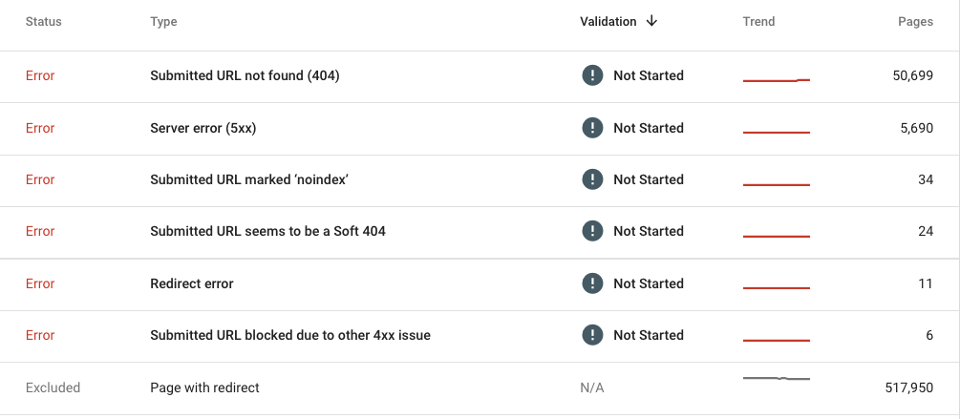

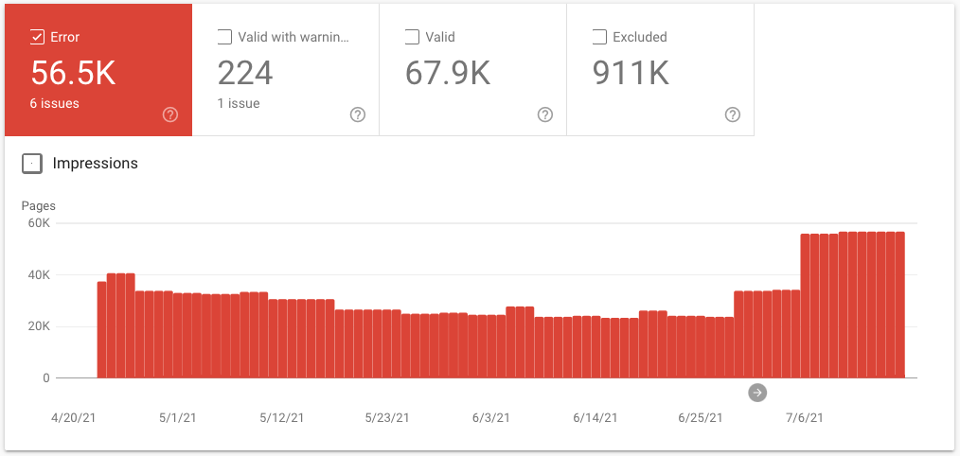

August 26, 2021

Google has demonstrated the importance of being indexed and of reducing errors by making multiple sets of information available to site owners in Search Console. The Coverage report is a literal to-do checklist of cleanup items, yet few website owners take the time to use it to maximize the global indexation of their websites.

It is so important to ensure you are being crawled and indexed that there are often multiple items on any technical SEO checklist to ensure you are maximizing your “crawl budget.” Unfortunately, few brands actually monitor this critical activity of identifying, fixing, and preventing crawl errors. It is an even bigger problem for multinationals with extended brands, decentralized organizational structures, and multiple agencies.

This article will walk you through a few of the benefits of centrally managing your Google Search Console (GSC) accounts and XML site maps. We will offer a set of best practices for developing protocols for managing and monitoring this critical function across markets and brands.

Benefit 1 – Global view of URL coverage issues & opportunities

The image below is the scorecard of the errors and excluded URLs in the checklist above for a dot com domain of a multinational company. Google colored items in red for a reason. If you have an error list that is nearly the same size as your valid URL list, you have a problem. Most of these nearly 57k errors came from Google receiving a 500 status code. Looking at the indexing report, it was clear that crawling dropped almost to zero because Google did not want to overload the servers. Beyond common sense, Google has even told webmasters that excessive errors can reduce your crawl rate. In this case, the global DevOps team was completely unaware of the problem. Once the problem was detected, they were able to fix it immediately. That is the critical point: unless someone is looking at this data at a global level, the organization may not be aware of the problems.

Unless you have access to the Google Search Console for each domain, you will not be able to see this data. If you are using a global domain like .com and all the markets are represented as folders, you should have access to all markets. Unfortunately, many companies use many different domains, including branded dot coms and ccTLDs, and each of these often has its own GSC account. Often a bigger challenge arises when the GSC accounts were created by local agencies that view them as their own asset and won’t give access.

By managing your GSC account centrally with a team Gmail address, the account will outlast any individual employee, you will always have access, and the rest of the benefits below become possible. You can give agencies and local market teams access to their market subaccount and revoke it when members change, which helps keep it a bit more secure. In our next article on the best practices of implementing Centralized Management, we explain how to create and verify the master account.

Benefit 2 – Ensures creation and management of XML site maps

One of the biggest misconceptions we encounter when setting up Hreflang Builder for companies is the assumption that their CMS is correctly producing complete and error-free XML site maps. In nearly every case we find significant gaps in the quantity and quality of URLs created by the CMS.

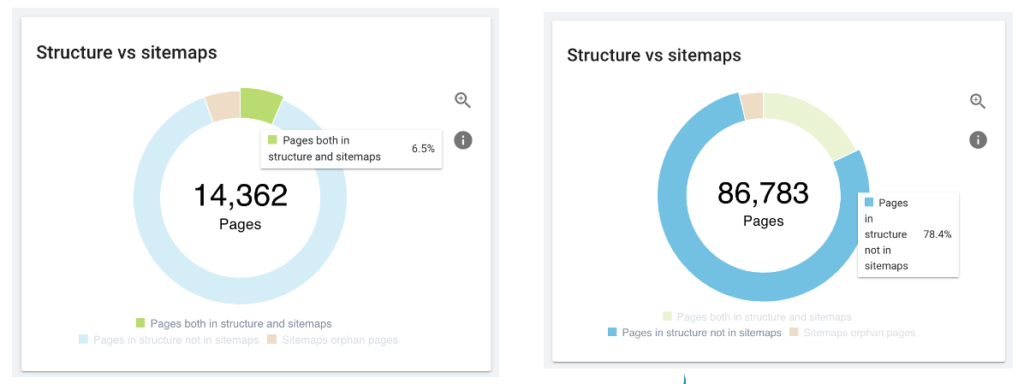

Most of the major SEO diagnostic tools now have reports that compare the URLs found on your site to what is in the XML site map. Below is an example of OnCrawl’s Structure vs. XML Site Map report, indicating it identified 86k more URLs during its crawl than were in the XML site maps. I suggest that you at least run a sample of XML site maps through Screaming Frog to get a sense of the number of errors in the XML.

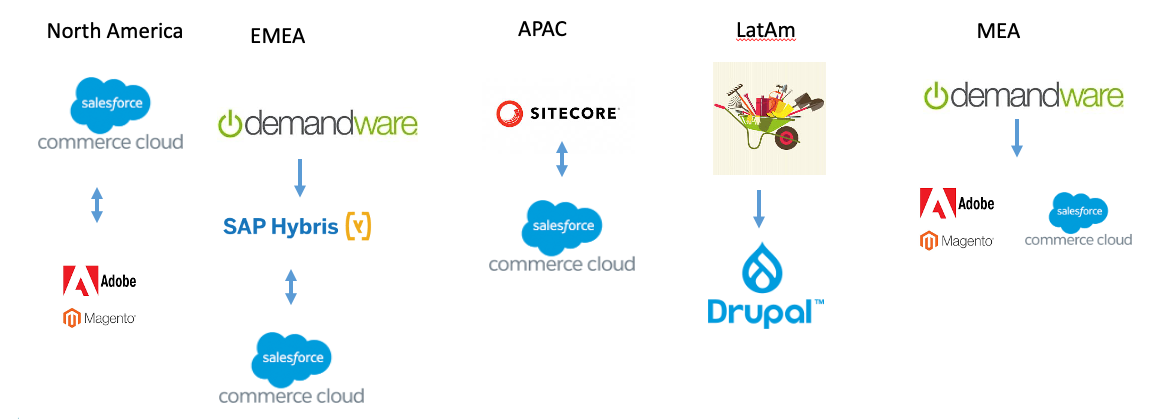
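The comparison these reports make can be approximated with a short script. The sketch below is a minimal illustration, assuming you already have a set of crawled URLs (for example, exported from your crawler) and the text of a standard `urlset` site map; the function names `sitemap_urls` and `coverage_gap` are hypothetical and not part of any tool mentioned here.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text):
    """Return the set of <loc> values found in a urlset site map."""
    root = ET.fromstring(xml_text)
    return {
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    }


def coverage_gap(crawled, in_sitemap):
    """Compare crawled URLs with site map URLs in both directions."""
    return {
        # Pages the crawler found that the site map never mentions.
        "missing_from_sitemap": crawled - in_sitemap,
        # Site map entries the crawler never reached (possibly stale).
        "not_found_in_crawl": in_sitemap - crawled,
    }
```

Both directions matter: URLs missing from the site map point to gaps in what the CMS generates, while site map entries never seen in the crawl are candidates for stale or orphaned pages.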

Last year we were engaged by a major consumer brand for one of their products that was suffering significant traffic loss in key markets and had unhappy market managers because the wrong market pages were appearing in local search results. In total, they had 187 language/market sites across 18 different Content Management Systems, plus 15 sites without a CMS. We worked with them to catalog the various market sites for the brand and create a matrix that lists the website, country, language, and, where possible, the XML site map(s). The results of that deep dive were even worse than we expected:

- 76% of the URLs listed in XML site maps redirected or returned a 404 error, making them worthless

- 70% of the market sites did not have XML site maps, and most that did had site maps more than a year old

- 62 sites were built with an old CMS that could not create XML site maps

- Only 45 sites had Google Search Console set up

- 12 agencies refused to give GSC access
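The first finding in the list above, the share of site map URLs that redirect or return a 404, can be measured once you have a status code for each site map URL. The sketch below is only an illustration: it assumes the status codes have already been collected (for example, from a crawler export) into a dictionary, and the names `classify` and `sitemap_health` are hypothetical.

```python
from collections import Counter


def classify(status):
    """Bucket an HTTP status code into a coarse category."""
    if 200 <= status < 300:
        return "ok"
    if 300 <= status < 400:
        return "redirect"
    if status == 404:
        return "not_found"
    return "other_error"


def sitemap_health(statuses):
    """Return the percentage of site map URLs in each category.

    `statuses` maps each site map URL to the status code it returned.
    """
    total = len(statuses)
    if total == 0:
        return {}
    counts = Counter(classify(code) for code in statuses.values())
    return {
        category: round(100 * counts[category] / total, 1)
        for category in ("ok", "redirect", "not_found", "other_error")
    }
```

Anything outside the "ok" bucket is a URL that should be corrected in, or removed from, the site map.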

The first step is to understand the breadth and depth of your web ecosystem and the quality of your XML site maps as you build your Market Matrix. The Market Matrix is just as it sounds: a master list of all of your websites, including their domain, language, market(s) targeted, the location of their XML site map(s), and whether you have found a GSC account.
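A Market Matrix can live in a spreadsheet, but it helps to think of it as structured data. The following is a minimal sketch of one possible shape, assuming the fields described above; the class name `MarketSite`, the field names, and the `audit` helper are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class MarketSite:
    """One row of a (hypothetical) Market Matrix."""
    domain: str                      # e.g. "example.de" or "example.com/fr/"
    language: str                    # language code, e.g. "de"
    markets: List[str]               # country codes targeted, e.g. ["DE", "AT"]
    sitemap_urls: List[str] = field(default_factory=list)
    gsc_account: Optional[str] = None  # owner of the GSC property, if found


def audit(matrix):
    """Summarize which sites lack a site map or a known GSC account."""
    return {
        "total": len(matrix),
        "no_sitemap": [s.domain for s in matrix if not s.sitemap_urls],
        "no_gsc": [s.domain for s in matrix if s.gsc_account is None],
    }
```

Even a simple summary like this makes the gaps visible, such as how many sites have no site map or no accessible GSC account.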

Once you have a decent assessment you can decide how to manage the future creation of XML site maps either using your CMS or an SEO diagnostic tool. We recommend you at least submit a list of critical pages for each market via the master GSC account.

Benefit 3 – Manage Google geolocation settings

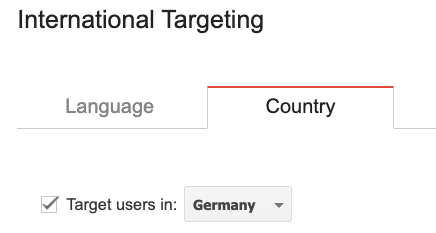

We can argue that centralized management is not needed to set geolocation. You can just as easily manage the location setting in each individual account and have the account owners verify it with a screenshot, or eliminate the need completely by using a ccTLD. However, if you are using a global top-level domain like .com or one of the cool .io domains, it is important to ensure all of the markets are set correctly. We find that the majority of the time geolocation has not been set or is set to an incorrect market.

We often see a problem where a German-language site is meant to target all German-speaking markets, but someone has set it to target only Germany.

By having all of the domains in the master account, it is easier to check that the setting is present and correct. In our case above, only 45 of 187 sites had GSC accounts, leaving all of the sites on folders and subdomains without any location settings.

Benefit 4 – Enables centralized XML site map hosting

Let’s take our biggest problem mentioned previously, the 15 sites that cannot create XML site maps. As the CMS cannot create them, they would need to be created using a 3rd party tool and manually uploaded to the individual servers for those websites, which can be nearly impossible to get done. We had a case where the agency that built the sites went out of business and the local teams did not even have basic access to the websites, let alone the ability to upload XML site maps.

By using a custom central XML host and cross-domain verification, you can create the XML site maps with any solution you wish, upload them to that domain, and submit the location to Google. You can use a unique domain on the same or a different host, or you can create an S3 bucket on AWS and proxy the location to a domain or a folder on a primary domain. The location is not really relevant as long as it and all of the included domains are verified in the master GSC account.

We have helped a number of companies use scheduled jobs in Screaming Frog to crawl and build XML site maps that can be imported to that server at any interval you wish. A side benefit was that these XML site maps could also be imported into local site search applications where the CMS did not have the ability to update the database.
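If your crawler can only export a plain list of URLs, turning that list into a site map file is straightforward. The sketch below is a minimal example using Python's standard library, assuming the input is a list of absolute URLs; the function name `build_sitemap` is hypothetical, and this is not how Screaming Frog itself generates site maps.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS = 50_000  # per-file limit in the sitemaps.org protocol


def build_sitemap(urls):
    """Build a urlset site map (as UTF-8 bytes) from a list of URLs."""
    unique = sorted(set(urls))  # drop duplicates, keep output stable
    if len(unique) > MAX_URLS:
        raise ValueError("Too many URLs for one file; split into several site maps")

    # Serialize the sitemap namespace as the default (unprefixed) namespace.
    ET.register_namespace("", SITEMAP_NS)
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for url in unique:
        node = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        # ElementTree escapes characters such as "&" when serializing.
        ET.SubElement(node, f"{{{SITEMAP_NS}}}loc").text = url
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)
```

The returned bytes can be written to a file in binary mode and uploaded to the central host on whatever schedule suits you.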

Benefit 5 – Enables automated creation of HREFLang XML

By unlocking Benefit 4, you can leverage one of the major features of HREFLang Builder. Companies have wanted a fully automated solution to build, map, and upload HREFLang XML site maps directly to their server without any human intervention. HREFLang Builder can import the XML site maps created by your CMS, URL uploads, or data from the APIs of most SEO diagnostic tools, then run the required mappings and automatically upload the results to the central server. The Market Matrix helps us align the URLs to the correct domain and output a master XML index file with links to all of the individual site XML site maps with alternate pages referenced.
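For readers who have not seen one, an HREFLang XML site map lists each URL together with `xhtml:link` elements for every alternate version of that page, including the page itself. The sketch below shows one way to produce that structure with Python's standard library. It is an illustration only and not how HREFLang Builder is implemented; the function name `build_hreflang_sitemap` and the input shape (one dictionary per page, mapping hreflang code to URL) are assumptions.

```python
import xml.etree.ElementTree as ET

SM = "http://www.sitemaps.org/schemas/sitemap/0.9"
XHTML = "http://www.w3.org/1999/xhtml"


def build_hreflang_sitemap(page_groups):
    """Build an hreflang site map as UTF-8 bytes.

    `page_groups` is a list of dicts; each dict maps an hreflang code
    (e.g. "de-at") to the URL serving that language/market version.
    """
    ET.register_namespace("", SM)
    ET.register_namespace("xhtml", XHTML)
    urlset = ET.Element(f"{{{SM}}}urlset")
    for alternates in page_groups:
        # One <url> entry per distinct URL, even if it serves several codes.
        for url in dict.fromkeys(alternates.values()):
            node = ET.SubElement(urlset, f"{{{SM}}}url")
            ET.SubElement(node, f"{{{SM}}}loc").text = url
            # Every entry lists the full set of alternates, itself included.
            for code, href in alternates.items():
                ET.SubElement(
                    node,
                    f"{{{XHTML}}}link",
                    {"rel": "alternate", "hreflang": code, "href": href},
                )
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)
```

The hard part in practice is not the XML but the mapping: deciding which URLs on different domains are versions of the same page, which is where the Market Matrix comes in.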

If you have any of the complications and complexity mentioned in this article and need a hreflang solution please contact us and we can see if we can create the perfect solution for you.

Challenge 1 – Requires resources to set up correctly

We will be the first to admit that you cannot just snap your fingers and have a fully deployed solution. Often creating the Market Matrix itself is a challenge. In over 20 years in international marketing, only a handful of companies have had a documented list of all of their sites readily available.

Organizing, setting up, and managing this process so that it generates benefits for the long term will take resources. The initial setup may take a few weeks, with a variety of roles contributing to different activities. We recommend that you make this ongoing activity part of your Search Center of Excellence.

Like everything, you don’t have to solve it overnight. Start with the markets where you have the biggest opportunity, the biggest cannibalization, or even the fewest resources. One of our clients focused on Latin America, and just getting XML site maps updated and submitted to the central domain resulted in a 58% increase in traffic to those markets in 3 months.

Challenge 2 – Local teams & agencies may not want to give up control

In the consumer products project, we immediately ran into strong resistance from local teams and agencies that felt they were losing something by having this managed at the global level. Ironically, those that fought the hardest were those with the poorest performance. To be fair, in a few cases the local agencies had indexing and site map improvements on their to-do list, in one case for 5 years, but due to the local CMS nothing could be done.

We found the key to getting local support was having a senior project sponsor, demonstrating the benefits to the markets, ensuring no capabilities were lost, and reporting the increased traffic and leads. If the markets only have upside and can get support to implement items that have stagnated for a long time, they will often be willing participants.

Setting Up your Coverage Center of Excellence

In our next article, we will go into detail about the various steps and best practices for setting up your Coverage Center of Excellence. If you have any questions or want to see how HREFLang Builder can benefit your global performance please reach out.