The purpose of this post is to address a growing problem: Regional Growth Managers doubling down on IP detection as a way to reduce shopping cart abandonment. In some regions, such as Latin America and Asia Pacific, shopping cart abandonment can be as high as 87%. To reduce it, they are resorting to more aggressive IP detection that redirects or blocks users and, at the same time, blocks search engines from accessing the site.

Last week I talked to the CMO of a large eCommerce website that had lost most of its traffic from Latin America and wanted to know if hreflang could help recover the lost sales. The CMO said his DevOps Manager had told him they did not need hreflang because they were using IP address-based Geo Redirection, and users would "always get to the correct country website," making hreflang unnecessary.

For those new to the concepts of Geo Redirects and hreflang elements, let me define them and add some context. If you have ever traveled to another country, tried to visit a website you have used before, and ended up on the local language or country version of that site, you have experienced IP or Geo Redirection. Geo Redirection is an automated process that looks up the IP (Internet Protocol) address of the phone or computer you are using (like a form of caller ID) and, based on the physical location of that device, redirects you to the website the company believes is the best match for where you are connected to the internet.

For example, a shopper in Lima, Peru, who clicks a blog post link to a well-reviewed product would be detected as being in Lima, Peru, and, regardless of where the link pointed, would be redirected to the product page that the company's rules designate for visitors from Peru. The problem is this: what if the visitor is Google, connecting from Mountain View, California, and trying to crawl and index the page for this amazing product?
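To make the mechanics concrete, here is a minimal Python sketch of IP-based Geo Redirection. The IP-to-country table, the `example.com` URLs, and the `geo_redirect` function are hypothetical stand-ins for a real GeoIP database and a vendor's rule engine. The only point it illustrates is that the destination depends on the visitor's IP, not on the link that was clicked.

```python
# Hypothetical IP-to-country lookup; a real deployment would query a GeoIP
# database or vendor service. The IPs are from documentation-only ranges.
IP_COUNTRY = {
    "203.0.113.10": "PE",   # a shopper in Lima, Peru
    "198.51.100.7": "US",   # a visitor (or crawler) connecting from the US
}

# Hypothetical rules: which country site each country is sent to.
COUNTRY_SITE = {
    "PE": "https://example.com/pe",
    "US": "https://example.com/us",
}
DEFAULT_SITE = "https://example.com/us"


def geo_redirect(client_ip: str, requested_path: str) -> str:
    """Return the URL a visitor ends up on after IP-based Geo Redirection.

    The product part of the path is kept, but the country site is chosen
    purely from the visitor's IP, whatever country the link pointed to.
    """
    country = IP_COUNTRY.get(client_ip)
    site = COUNTRY_SITE.get(country, DEFAULT_SITE)
    # Drop the country prefix the link used, e.g. "/us/camera-123" -> "camera-123".
    product = requested_path.strip("/").split("/", 1)[-1]
    return f"{site}/{product}"


print(geo_redirect("203.0.113.10", "/us/camera-123"))  # https://example.com/pe/camera-123
print(geo_redirect("198.51.100.7", "/pe/camera-123"))  # https://example.com/us/camera-123
```

The second call is the crawler's view: a US-based request for the Peru page never reaches it.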

Hreflang elements are annotations that tell participating search engines that a page has alternate language or country versions and, when a user searches from one of those markets, to show the local-language alternate rather than the version Google originally considered most relevant for the query. Google MUST be able to visit the alternate version of each page to validate the cluster. If it cannot, that pair will be invalidated.
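As a minimal sketch (the URLs and function names are hypothetical), the following Python builds the `<link rel="alternate" hreflang="de-de" ...>` style elements for one page cluster and flags pairs whose alternate does not link back. A page Googlebot could not fetch, for example because it was geo-redirected away, has no annotations on record, so every pair pointing at it is reported.

```python
from typing import Dict, List, Tuple


def hreflang_links(cluster: Dict[str, str]) -> List[str]:
    """Build the hreflang <link> elements for one page cluster.

    `cluster` maps an hreflang value (language, or language-region, or
    "x-default") to the absolute URL of that alternate. Every page in the
    cluster should carry the full set, including a reference to itself.
    """
    return [
        f'<link rel="alternate" hreflang="{code}" href="{url}" />'
        for code, url in sorted(cluster.items())
    ]


def missing_return_links(pages: Dict[str, Dict[str, str]]) -> List[Tuple[str, str]]:
    """Find (page, alternate) pairs where the alternate does not link back.

    `pages` maps each crawled URL to the hreflang cluster found on it.
    Uncrawlable pages have no entry, so pairs pointing at them are flagged.
    """
    problems = []
    for url, cluster in pages.items():
        for alt_url in cluster.values():
            if alt_url == url:
                continue  # self-reference needs no return link
            back = pages.get(alt_url, {})
            if url not in back.values():
                problems.append((url, alt_url))
    return problems


US = "https://example.com/us/camera"
DE = "https://example.com/de/camera"
cluster = {"en-us": US, "de-de": DE, "x-default": US}

for tag in hreflang_links(cluster):
    print(tag)

# Only the US page could be crawled, so the US -> DE pair cannot be validated.
print(missing_return_links({US: cluster}))
```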

Why is using IP Detection a problem for Search?

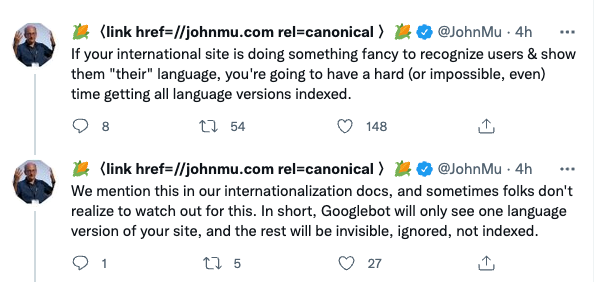

Google just posted a short PSA about the crawling problems IP detection causes. John highlights the problem when companies deploy "fancy techniques" to recognize the user's location and suggests that site owners review Google's Multilingual and International Guidelines.

The problem for search engines lies in the definition of Geo Redirection itself: it is based on the visitor's IP address. When a search engine comes to the website to catalog it for scoring and ranking, it is treated as just another visitor and sent to the site that matches its location. Search engines tend to crawl from their dominant markets (Google and Bing from the US, Yandex from Russia), so they are routed to the site designated for that market, making it nearly impossible for them to reach the non-US versions of a site when IP detection is deployed.

Let's use Google as our example. If Googlebot requests a page from your German, French, or Japanese website and the IP detection maps Googlebot's IP address to the US, the system will redirect Google to the US website. Every time Googlebot (crawling from the US) tries to visit a non-US web page behind Geo Redirection, it is sent away, resulting in ONLY the US site getting indexed. If Google cannot index and score the German website, that site cannot rank for any German-language queries.
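The outcome described above can be simulated in a few lines. This is a hypothetical model, not how any real crawler works internally: it assumes every request is redirected to the site for the visitor's country, and that Googlebot's IP maps to the US.

```python
# Hypothetical rules: every non-exempt visitor goes to the site for the
# country their IP maps to. Googlebot's IP is assumed to map to the US.
SITE_FOR_COUNTRY = {"US": "us", "DE": "de", "FR": "fr", "JP": "jp"}


def serve(requested_url: str, visitor_country: str) -> str:
    """Return the URL actually served after IP-based redirection."""
    _site, page = requested_url.strip("/").split("/", 1)
    target_site = SITE_FOR_COUNTRY.get(visitor_country, "us")
    return f"/{target_site}/{page}"


def crawl(urls, crawler_country: str) -> set:
    """Simulate a crawler fetching every URL; return the URLs it can index."""
    return {serve(url, crawler_country) for url in urls}


sitemap = ["/us/camera", "/de/camera", "/fr/camera", "/jp/camera"]
indexed = crawl(sitemap, crawler_country="US")
print(sorted(indexed))  # ['/us/camera']
```

Four URLs are submitted, but only one can ever be fetched, so only the US page can be indexed and ranked.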

If Google indexes only the US site and, like many websites, the site includes the country in its title tag, a user in France or Japan may hesitate to click, assuming it is a US site priced in US dollars with expensive shipping. What if the majority of searchers do not click the link? Working with multinationals, especially in Latin America, we found that at least 65% of searchers did not click through to non-local market websites. If the searcher in Peru does click on the US listing, they will be pleasantly surprised when they are redirected to the company's Peru website. But only a subset of users will take that risk. One of our multinational electronics clients deployed Hreflang Builder and updated its IP detection methodology to grant Google an exception, resulting in a 200% increase in regional traffic.

The solution that gets the best outcome is to keep IP-based Geo Redirection but grant an exception in the redirect rules for search engine user agents. If a request for the Australian page comes from a US IP address but presents the Googlebot user agent, the search engine is allowed through to the Australian website.
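A minimal sketch of that exemption, assuming a simple substring match on the user agent (the token list, country-to-site rules, and function names are hypothetical). Crawlers receive the page they requested; everyone else is geo-redirected as before. User-agent strings can be spoofed, so production setups often also verify the crawler's IP, for example with a reverse DNS lookup.

```python
# Hypothetical crawler user-agent substrings to exempt from redirects.
SEARCH_ENGINE_TOKENS = ("googlebot", "bingbot")

# Hypothetical country-to-site rules.
COUNTRY_SITE = {"US": "us", "AU": "au", "GB": "uk"}


def is_search_engine(user_agent: str) -> bool:
    """True if the user-agent string contains a known crawler token."""
    ua = user_agent.lower()
    return any(token in ua for token in SEARCH_ENGINE_TOKENS)


def resolve(requested_path: str, visitor_country: str, user_agent: str) -> str:
    """Return the path to serve: crawlers get what they asked for, users are geo-redirected."""
    if is_search_engine(user_agent):
        # Exemption: no redirect, serve the requested page unchanged.
        return requested_path
    _site, page = requested_path.strip("/").split("/", 1)
    target = COUNTRY_SITE.get(visitor_country, "us")
    return f"/{target}/{page}"


GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
BROWSER_UA = "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"

print(resolve("/au/camera", "US", GOOGLEBOT_UA))  # /au/camera
print(resolve("/au/camera", "US", BROWSER_UA))    # /us/camera
```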

This allows Google to index and score the pages. If the websites also use hreflang, Google will see that there are versions for US English, Australian English, and even UK English. Because it has all the versions and understands how you have assigned pages to language markets, it will present the Australian page to searchers in Australia and the US page to those in the United States.
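The matching step can be illustrated with a deliberately simplified model. This is not Google's actual selection algorithm, just a hypothetical lookup order: exact language-region match, then language-only, then `x-default`.

```python
from typing import Dict, Optional


def pick_alternate(cluster: Dict[str, str], language: str, country: str) -> Optional[str]:
    """Simplified model of choosing an hreflang alternate for a searcher.

    Tries language-region, then language-only, then x-default.
    Illustrative only; real search engines weigh many more signals.
    """
    language, country = language.lower(), country.lower()
    lowered = {code.lower(): url for code, url in cluster.items()}
    for key in (f"{language}-{country}", language, "x-default"):
        if key in lowered:
            return lowered[key]
    return None


cluster = {
    "en-US": "https://example.com/us/camera",
    "en-AU": "https://example.com/au/camera",
    "en-GB": "https://example.com/uk/camera",
    "x-default": "https://example.com/us/camera",
}

print(pick_alternate(cluster, "en", "AU"))  # https://example.com/au/camera
print(pick_alternate(cluster, "en", "CA"))  # https://example.com/us/camera (x-default)
```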

Are Search Engine Exemptions to IP Detection Cloaking?

Strangely, a few GeoTargeting vendors claim that exempting search engines from IP rules is a form of "cloaking." Google defines cloaking as serving search engines a page or content that differs from what regular users see. In the video explaining cloaking, Matt Cutts offers a specific GeoTargeting example and explains that it is NOT considered cloaking as long as you are not swapping content.

Let's think about what is actually happening when Google (or any search engine) is exempted from the redirection rules. First, we are exempting the search engine user agent from having the rules applied to it. When the IP rules are NOT applied, the server returns the page that was requested rather than redirecting to one specific to the requester's IP. Google is requesting a specific page, and in many cases it is requesting that page because we asked it to via an XML sitemap. If we apply the IP redirection rules and Google requests a German page from the US, it will be routed to the US page, blocking it from seeing the page it asked for, which, to me, is cloaking.

If you are still concerned that this is cloaking, look at the top 20 global websites: you will find they are doing it, and they have not received any sort of penalty for it. Again, what crosses the line is the intent to deceive Google or the user.

Locale-Adaptive Page Protocols Confusion

In 2015 Google announced a change in how it crawled and indexed locale-adaptive pages, meaning pages that change their content based on the visitor's perceived country or language without changing the URL. The big change was that Google would start crawling from various points around the world so that, on sites serving content by IP, it could reach the local versions of pages. Unfortunately, many consultants and Geo Targeting vendors incorrectly cite this announcement as guidance for managing multinational websites. If you have a specific page for Spanish and another unique page for German, then you do not have a locale-adaptive site, and the recommendations on this page are not relevant. Below is a direct quote from Google indicating you should have separate URLs for each language version and should leverage hreflang elements.

Note that these new configurations do not alter our recommendation to use separate URLs with rel=alternate hreflang annotations for each locale. We continue to support and recommend using separate URLs as they are still the best way for users to interact and share your content, and also to maximize indexing and better ranking of all variants of your content.

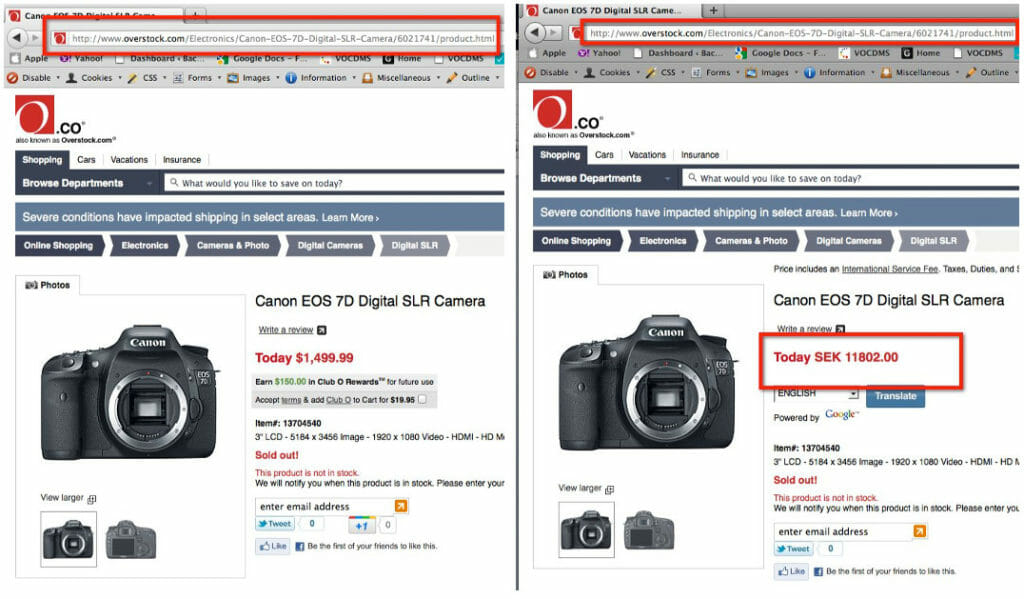

Until about 2020, many e-commerce systems relied on Java-based platforms that swapped content based on the user's location or language preference. For example, the screen capture shows the experience of a visitor to an Overstock.com page for a specific camera from both a US IP (left) and a Swedish IP (right). Based on location, the price was shown in US dollars or Swedish kronor, but the URL DID NOT change. This meant there was ONLY ONE actual URL for that product, even though it could be presented in multiple languages and currencies. As a result, only the US English version of the page was indexed, and the site did not rank well in any other market in the world.

One of the ways sites have tried to get around this problem is to append a language or country parameter to the end of the URL. We had to build functionality into Hreflang Builder to accommodate this, and in one case a client's system went haywire and appended every language and country combination to every URL, resulting in over 8,000 country and language entries in its hreflang. In another case, all of these parameter versions were made useless when a DevOps person added a canonical tag pointing to the root URL, telling Google not to use any of the local-language parameter URLs. Google addressed this problem earlier this year, explaining the challenges parameters create for Google and, if you must use them, how to use Google's URL Parameters tool. Note: the URL Parameters tool has since been deprecated, and Google suggested that sites use the hreflang element instead.
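The canonical mistake described above is easy to catch with a quick audit. Here is a minimal sketch, assuming you have already extracted each alternate's canonical URL (the `?lang=` URLs and the `canonical_conflicts` helper are hypothetical). It flags every hreflang alternate whose canonical points somewhere other than itself, which is what tells Google to drop those variants.

```python
from typing import Dict, List


def canonical_conflicts(cluster: Dict[str, str], canonicals: Dict[str, str]) -> List[str]:
    """Return hreflang alternates whose canonical points to a different URL.

    `cluster` maps hreflang values to URLs; `canonicals` maps each URL to the
    canonical it declares. A URL with no declared canonical counts as self-canonical.
    """
    return sorted({
        url for url in cluster.values()
        if canonicals.get(url, url) != url
    })


ROOT = "https://example.com/camera"
cluster = {
    "en": ROOT + "?lang=en",
    "de": ROOT + "?lang=de",
    "ja": ROOT + "?lang=ja",
}

# The DevOps change: every parameter URL canonicalizes to the root URL.
broken = {url: ROOT for url in cluster.values()}
print(canonical_conflicts(cluster, broken))  # all three parameter URLs

# Self-referencing canonicals keep every alternate eligible.
fixed = {url: url for url in cluster.values()}
print(canonical_conflicts(cluster, fixed))   # []
```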

How to Correctly Implement IP Detection with Hreflang

First, to achieve the best results, use IP-based Geo Redirection but make an exception in the redirect rules for search engine user agents. With the exemption in place, search engines can visit and index all pages no matter where they are crawling from.
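Because anyone can send a Googlebot user-agent string, a more careful exemption can also confirm the request really comes from Google using a reverse DNS lookup followed by a forward lookup. The sketch below follows that pattern with Python's standard `socket` module. The accepted hostname suffixes and the helper names are assumptions for illustration; the lookups are injectable so the logic can be tested without network access, and `gethostbyname_ex` covers IPv4 only.

```python
import socket
from typing import Callable, List, Tuple

# Hostname suffixes treated as Google crawlers in this sketch.
GOOGLE_CRAWLER_SUFFIXES = (".googlebot.com", ".google.com")

Lookup = Callable[[str], Tuple[str, List[str], List[str]]]


def is_verified_googlebot(
    ip: str,
    reverse_lookup: Lookup = socket.gethostbyaddr,
    forward_lookup: Lookup = socket.gethostbyname_ex,
) -> bool:
    """Reverse-resolve the IP, check the domain, then forward-resolve to confirm."""
    try:
        host, _aliases, _addrs = reverse_lookup(ip)
    except OSError:  # socket.herror and socket.gaierror subclass OSError
        return False
    if not host.endswith(GOOGLE_CRAWLER_SUFFIXES):
        return False
    try:
        _name, _aliases, addresses = forward_lookup(host)
    except OSError:
        return False
    # The forward lookup must map back to the same IP, or the PTR record is untrusted.
    return ip in addresses


def should_exempt(ip: str, user_agent: str, **lookups) -> bool:
    """Skip geo redirects only when the UA claims Googlebot AND the IP verifies."""
    return "googlebot" in user_agent.lower() and is_verified_googlebot(ip, **lookups)
```

In a redirect rule, `should_exempt(client_ip, user_agent)` would be checked before any IP-based routing; results are worth caching, since DNS lookups on every request add latency.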

Second, and this is the Google-recommended way, use one or more of Google's suggested methods to indicate the geolocation of a page. When you leverage these methods along with properly implemented hreflang, Google will notice and fetch the alternate versions and be more effective at showing the correct version in each market, reducing cannibalization and shopping basket abandonment.
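One of the ways Google accepts hreflang annotations is an XML sitemap, where each `<url>` entry lists its alternates as `xhtml:link` elements. Here is a minimal sketch using Python's standard `xml.etree.ElementTree`; the `hreflang_sitemap` function and the example URLs are hypothetical, and a production generator would also handle sitemap size limits.

```python
import xml.etree.ElementTree as ET
from typing import Dict, List

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
XHTML_NS = "http://www.w3.org/1999/xhtml"


def hreflang_sitemap(clusters: List[Dict[str, str]]) -> str:
    """Build a sitemap where every page lists all alternates in its cluster."""
    ET.register_namespace("", SITEMAP_NS)
    ET.register_namespace("xhtml", XHTML_NS)
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for cluster in clusters:
        # One <url> per distinct page (x-default often repeats another URL).
        for url in dict.fromkeys(cluster.values()):
            url_el = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
            ET.SubElement(url_el, f"{{{SITEMAP_NS}}}loc").text = url
            for code, alt in cluster.items():
                ET.SubElement(
                    url_el, f"{{{XHTML_NS}}}link",
                    rel="alternate", hreflang=code, href=alt,
                )
    return ET.tostring(urlset, encoding="unicode")


clusters = [{
    "en-us": "https://example.com/us/camera",
    "de-de": "https://example.com/de/camera",
    "x-default": "https://example.com/us/camera",
}]
print(hreflang_sitemap(clusters))
```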

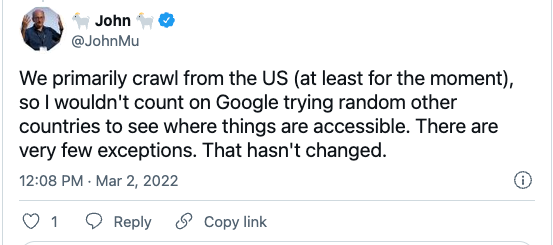

The Myth of Global Crawling

Crawling the web from the far reaches of the world turned out to be far more complex and, I assume, more expensive than anticipated, and in 2017 Google found that doing so did not create an advantage, so it slowed the deployment. In March of 2022, Google confirmed that it primarily crawls websites from the United States, making IP address-based Geo Redirects a challenge.

My own tests, and those of others, indicate that very few Googlebot visits to large e-commerce websites come from non-US IP addresses. Google also suggests running your own tests by looking up the IP addresses of Googlebot visits in the log files of your local sites. Additionally, your IP detection vendor should be able to give you this data, specifically how many Googlebot requests went to specific pages and/or sections of the website.
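If your servers write the common "combined" access log format, a short script can produce that breakdown yourself. This sketch assumes that log format (the regex, sample lines, and `googlebot_hits` helper are hypothetical). It counts requests whose user agent claims to be Googlebot by top-level section and status code, and collects the client IPs so you can geolocate or verify them afterward. A `301` on `/de/` alongside `200`s on `/us/` is the pattern to look for.

```python
import re
from collections import Counter
from typing import Iterable, Set, Tuple

# Combined log format: ip ident user [time] "METHOD path proto" status size "referer" "ua"
LOG_RE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def googlebot_hits(lines: Iterable[str]) -> Tuple[Counter, Set[str]]:
    """Count Googlebot-claimed requests by (section, status) and collect their IPs."""
    hits: Counter = Counter()
    ips: Set[str] = set()
    for line in lines:
        m = LOG_RE.match(line)
        if not m or "googlebot" not in m.group("ua").lower():
            continue
        # First path segment, e.g. "/de/camera" -> "de".
        section = m.group("path").lstrip("/").split("/", 1)[0] or "(root)"
        hits[(section, m.group("status"))] += 1
        ips.add(m.group("ip"))
    return hits, ips


GBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample = [
    f'66.249.66.1 - - [10/Oct/2022:13:55:36 +0000] "GET /de/camera HTTP/1.1" 301 0 "-" "{GBOT}"',
    f'66.249.66.1 - - [10/Oct/2022:13:55:37 +0000] "GET /us/camera HTTP/1.1" 200 5120 "-" "{GBOT}"',
    '203.0.113.9 - - [10/Oct/2022:13:56:00 +0000] "GET /de/camera HTTP/1.1" 200 5120 "-" "Mozilla/5.0"',
]
hits, ips = googlebot_hits(sample)
print(hits)
print(ips)
```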

<Rant> One such company, when pressed for this analysis on behalf of a client, said that providing the data would be a privacy violation and refused to grant search engines an exemption, since they believed Google was crawling from all countries. The good news is that the client switched to a provider who would grant the exception.</Rant>